Sharing our Kamailio exporter for Prometheus

How to write a Prometheus exporter in Go

October 9, 2018

TL;DR

- The exporter is available here: https://github.com/florentchauveau/kamailio_exporter

- MIT licensed

- Exposes metrics from tm.stats, sl.stats, core.shmmem and dispatcher.list

- Contributions welcome

- We also shared our binrpc Go library

- Also, check out our FreeSWITCH exporter

A bit of history

A long time ago (in a g[…]y), we were using Nagios. Over time, Nagios did not scale as well as we wished. A single Nagios instance struggled to fetch metrics from thousands of servers, and proper alerts were a pain to maintain.

Though Nagios is still used in a lot of systems, it feels like a thing of the past. (Unless Perl becomes a thing again? Ask JavaScript maybe.)

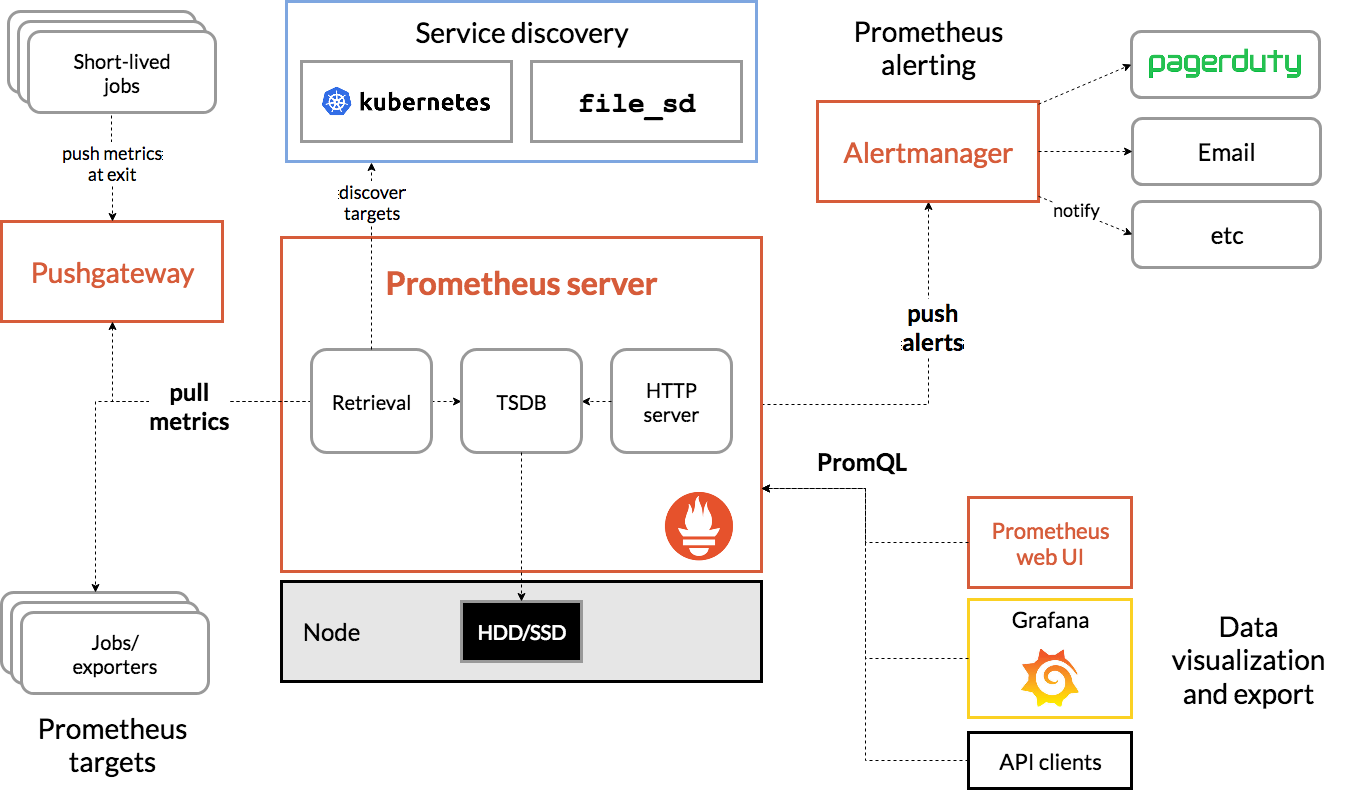

Here comes Prometheus, an open source systems monitoring and alerting toolkit.

Prometheus

Prometheus connects to targets and fetches metrics using simple HTTP GET requests, then stores them in its time-series database.
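To make the pull model concrete, here is a minimal sketch of what a target looks like from Prometheus' point of view, using only the Go standard library. The metric name `demo_up`, its help text and the helper names are made up for illustration. A real exporter would normally rely on the Prometheus client library instead of formatting text by hand.

```go
package main

import (
	"fmt"
	"net/http"
	"net/http/httptest"
)

// renderGauge formats a single gauge sample in the Prometheus text
// exposition format (HELP line, TYPE line, then the sample itself).
func renderGauge(name, help string, value float64) string {
	return fmt.Sprintf("# HELP %s %s\n# TYPE %s gauge\n%s %g\n", name, help, name, name, value)
}

// metricsHandler answers a scrape, which is just an HTTP GET.
func metricsHandler(w http.ResponseWriter, r *http.Request) {
	w.Header().Set("Content-Type", "text/plain; version=0.0.4")
	fmt.Fprint(w, renderGauge("demo_up", "Whether the demo target is healthy.", 1))
}

func main() {
	// Simulate one scrape in-process instead of opening a real port.
	rec := httptest.NewRecorder()
	metricsHandler(rec, httptest.NewRequest(http.MethodGet, "/metrics", nil))
	fmt.Print(rec.Body.String())
}
```

In a long-running process, the same handler would be registered with `http.HandleFunc("/metrics", metricsHandler)` and served with `http.ListenAndServe`.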

For metrics that cannot be pulled, a Push Gateway is provided: metrics pushed to it are stored locally, and the gateway acts as a target for Prometheus. Clever.

Original image: Prometheus Overview

The targets that Prometheus connects to are either:

- software that exposes metrics directly (like the Docker Daemon or Ceph)

- exporters

When Prometheus connects to an exporter, the exporter pulls the metrics from the software or system it is targeting using whatever channel the software provides.

In our case, we wanted metrics from Kamailio, an open source SIP server that we are using as part of our SBC.

The Kamailio Exporter

Kamailio exposes RPC functions via its CTL module:

- by default, listens on the UNIX socket unix:/var/run/kamailio/kamailio_ctl

- can listen on TCP or UDP

- speaks a proprietary “BINRPC” protocol
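Since the CTL module can be reached over a UNIX socket or over the network, a client first has to turn an address into something it can dial. The sketch below is one possible way to do that in Go with `net/url`. The helper name `dialTarget` is hypothetical. The `unix:` and `tcp://` forms are the ones shown in the exporter's help output, and accepting a `udp://` form is an assumption.

```go
package main

import (
	"fmt"
	"net/url"
)

// dialTarget converts a scrape URI into the (network, address) pair that
// net.Dial expects. Hypothetical helper, not taken from the exporter.
func dialTarget(uri string) (network, address string, err error) {
	u, err := url.Parse(uri)
	if err != nil {
		return "", "", err
	}
	switch u.Scheme {
	case "unix":
		// For unix:/path/to/socket, the socket path ends up in u.Path.
		if u.Path == "" {
			return "", "", fmt.Errorf("missing socket path in %q", uri)
		}
		return "unix", u.Path, nil
	case "tcp", "udp": // the udp:// form is an assumption
		if u.Host == "" {
			return "", "", fmt.Errorf("missing host:port in %q", uri)
		}
		return u.Scheme, u.Host, nil
	default:
		return "", "", fmt.Errorf("unsupported scheme %q in %q", u.Scheme, uri)
	}
}

func main() {
	for _, uri := range []string{
		"unix:/var/run/kamailio/kamailio_ctl",
		"tcp://localhost:2049",
	} {
		network, address, err := dialTarget(uri)
		fmt.Println(network, address, err)
	}
}
```

The returned pair can be passed to `net.DialTimeout(network, address, timeout)` to open the connection.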

The command-line tool kamcmd implements the BINRPC protocol to talk to Kamailio.

Over the years, Kamailio has added modules to facilitate RPCs, most notably XHTTP and JSONRPCS modules. However, we wanted our exporter to work with the most basic Kamailio instances without requiring specific modules or configurations.

BINRPC

Our work began with writing a Go library that implements the BINRPC protocol.

The library is open source (MIT licensed) and available here: https://github.com/florentchauveau/go-kamailio-binrpc.

The BINRPC protocol is described in 66 lines of commented code in src/modules/ctl/binrpc.h of Kamailio. It is a binary protocol to encode/decode int, string, double, struct, array, and raw bytes. It has no notion of “methods”, “functions” or “return values”: it is simply type (un)marshalling.

Say you want to call the RPC function tm.stats: send the string “tm.stats” via BINRPC, and Kamailio will execute it. The response can contain multiple packets each carrying a type.

Our current Go implementation handles int, string and structs only.

Contributions are welcome!

Writing the exporter

Prometheus comes with handy Go libraries for exposing metrics.

Basically, the library handles HTTP requests (using Go's built-in HTTP server, net/http) and replies in the appropriate format.

The library exposes functions and structs to store metrics.

Implementation

In Go, implementing a custom collector means satisfying the Collector interface by implementing the Describe and Collect methods:

    type Collector interface {
        Describe(chan<- *Desc)
        Collect(chan<- Metric)
    }

The prometheus library provides a helper function for implementing Describe:

    func (c *Collector) Describe(ch chan<- *prometheus.Desc) {
        prometheus.DescribeByCollect(c, ch)
    }

Easy.

The Collect method is called every time Prometheus connects to our exporter and asks for metrics.

Our implementation is quite simple:

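    func (c *Collector) Collect(ch chan<- prometheus.Metric) {
        // Serialize scrapes: Collect may be called concurrently.
        c.mutex.Lock()
        defer c.mutex.Unlock()

        err := c.scrape(ch)

        if err != nil {
            // Record the failure and report Kamailio as down.
            c.failedScrapes.Inc()
            c.up.Set(0)
            log.Println("[error]", err)
        } else {
            c.up.Set(1)
        }

        // Always expose the exporter's own bookkeeping metrics.
        ch <- c.up
        ch <- c.totalScrapes
        ch <- c.failedScrapes
    }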

The method Collect can be called concurrently, so we need a mutex to make it concurrent-safe.

The prometheus library provides a channel ch to which we push the metrics we want to expose.
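To show why the mutex matters, here is a stripped-down analogue of the pattern that uses only the standard library. It is not the exporter's code: `sample` stands in for `prometheus.Metric`, the counters are plain floats, the `scrape` field is a hypothetical injection point, and the total is incremented inside `Collect` as a simplification.

```go
package main

import (
	"errors"
	"fmt"
	"sync"
)

// sample is a stand-in for prometheus.Metric in this sketch.
type sample struct {
	name  string
	value float64
}

// collector mirrors the shape of the exporter's Collector with plain fields.
type collector struct {
	mutex         sync.Mutex
	up            float64
	totalScrapes  float64
	failedScrapes float64
	scrape        func(ch chan<- sample) error // hypothetical injection point
}

// Collect serializes scrapes, records the outcome, then emits the
// bookkeeping samples on the channel.
func (c *collector) Collect(ch chan<- sample) {
	c.mutex.Lock()
	defer c.mutex.Unlock()

	c.totalScrapes++
	if err := c.scrape(ch); err != nil {
		c.failedScrapes++
		c.up = 0
	} else {
		c.up = 1
	}
	ch <- sample{"kamailio_up", c.up}
	ch <- sample{"kamailio_exporter_total_scrapes", c.totalScrapes}
	ch <- sample{"kamailio_exporter_failed_scrapes", c.failedScrapes}
}

// runConcurrently calls Collect from n goroutines and returns the counters.
func runConcurrently(c *collector, n int) (total, failed float64) {
	ch := make(chan sample, 3*n) // buffered so Collect never blocks in this demo
	var wg sync.WaitGroup
	for i := 0; i < n; i++ {
		wg.Add(1)
		go func() {
			defer wg.Done()
			c.Collect(ch)
		}()
	}
	wg.Wait()
	close(ch)
	return c.totalScrapes, c.failedScrapes
}

func main() {
	calls := 0
	c := &collector{scrape: func(chan<- sample) error {
		calls++ // safe: only runs while c.mutex is held
		if calls%2 == 0 {
			return errors.New("kamailio did not answer")
		}
		return nil
	}}
	total, failed := runConcurrently(c, 10)
	fmt.Println(total, failed)
}
```

Without the lock, the increments and the shared `calls` variable would race as soon as two scrapes overlap.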

We have two kinds of metrics:

- the static ones:

  - kamailio_exporter_total_scrapes: total scrapes

  - kamailio_exporter_failed_scrapes: number of failed scrapes

  - kamailio_up: is kamailio responding?

- the dynamic ones: the metrics we fetch from Kamailio itself.

The static ones are created with NewGauge and NewCounter. Like this:

    c.up = prometheus.NewGauge(prometheus.GaugeOpts{
        Namespace: namespace,
        Name:      "up",
        Help:      "Was the last scrape successful.",
    })

For the dynamic metrics, as the documentation says:

If you already have metrics available, created outside of the Prometheus context, you don’t need the interface of the various Metric types. You essentially want to mirror the existing numbers into Prometheus Metrics during collection. An own implementation of the Collector interface is perfect for that. You can create Metric instances “on the fly” using NewConstMetric, NewConstHistogram, and NewConstSummary (and their respective Must… versions). That will happen in the Collect method.

So we use NewConstMetric.

In our scrape method, you can find:

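    // metricDef and metricValues come from the surrounding scrape code (not shown).
    for _, metricValue := range metricValues {
        // Build a one-off metric from the value fetched from Kamailio.
        metric, err := prometheus.NewConstMetric(
            prometheus.NewDesc(metricDef.ExportedName(), metricDef.Help, metricValue.LabelKeys(), nil),
            metricDef.Kind,
            metricValue.Value,
            metricValue.LabelValues()...,
        )

        if err != nil {
            return err
        }

        ch <- metric
    }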

Metrics fetched from Kamailio are created on the fly, as recommended.

Port Allocation

We reserved port 9494, which is the default TCP port the exporter listens on.

CI/CD

The Prometheus ecosystem has defined a common way to build and release binaries.

That is why we are using Makefile.common and promu. Coupled with CircleCI, the pipeline compiles for all the configured architectures and automatically pushes the binaries to the release tags.

In our CircleCI config, the build step is like this:

    build:
      machine: true
      steps:
        - checkout
        - run: make promu
        - run: promu crossbuild -v
        - persist_to_workspace:
            root: .
            paths:
              - .build

make promu is part of Makefile.common; it downloads promu using go get.

Then, promu crossbuild, as the name implies, will crossbuild the project to the architectures defined in .promu.yml.

Then persist_to_workspace is used to persist the files built in the .build directory. This means that the files will be kept available for another job.

An example of the build job in action can be seen here.

The release_tags job configuration looks like this:

    release_tags:
      docker:
        - image: circleci/golang:1.11
      steps:
        - checkout
        - run: mkdir -v -p ${HOME}/bin
        - run: curl -L 'https://github.com/aktau/github-release/releases/download/v0.7.2/linux-amd64-github-release.tar.bz2' | tar xvjf - --strip-components 3 -C ${HOME}/bin
        - run: echo 'export PATH=${HOME}/bin:${PATH}' >> ${BASH_ENV}
        - attach_workspace:
            at: .
        - run: make promu
        - run: promu crossbuild tarballs
        - run: promu checksum .tarballs
        - run: promu release .tarballs
        - store_artifacts:
            path: .tarballs
            destination: releases

The first steps download aktau/github-release, a tool that promu relies on to push artifacts to GitHub releases.

attach_workspace makes the .build directory that we created in the previous job available.

promu crossbuild tarballs will create .tar.gz files containing the binary + the LICENSE files, as specified in .promu.yml.

promu checksum .tarballs creates a sha256sums.txt file with sha256 sums of the .tar.gz files, like this one.

promu release .tarballs uploads the tarballs to the release specified in the VERSION file located at the root of the repository. Make sure the git tag AND the VERSION file match when you tag!

With this, every time we tag a commit with a tag that matches /^v[0-9]+(\.[0-9]+){2}(-.+|[^-.]*)$/, CircleCI automatically crossbuilds and uploads the files to the GitHub release. Neat.
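If you are unsure whether a tag will trigger the release job, the pattern can be tried out locally. The sketch below compiles the same expression with Go's `regexp` package. The helper name `isReleaseTag` and the sample tags are ours, and Go's engine may differ from CircleCI's matcher for other patterns, so treat it as a quick sanity check only.

```go
package main

import (
	"fmt"
	"regexp"
)

// releaseTag is the tag filter quoted above, compiled with Go's regexp package.
var releaseTag = regexp.MustCompile(`^v[0-9]+(\.[0-9]+){2}(-.+|[^-.]*)$`)

// isReleaseTag reports whether a git tag would match the release filter.
func isReleaseTag(tag string) bool {
	return releaseTag.MatchString(tag)
}

func main() {
	for _, tag := range []string{"v0.1.0", "v1.2.3-rc1", "v1.2", "1.2.3", "v1.2.3.4"} {
		fmt.Printf("%-12s %v\n", tag, isReleaseTag(tag))
	}
}
```

Of these sample tags, only `v0.1.0` and `v1.2.3-rc1` match: the pattern requires a leading `v` and exactly three numeric components.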

An example of the release_tags job in action can be seen here.

Metrics

We implemented the following RPC methods:

- tm.stats (statistics of the transaction module)

- sl.stats (statistics of the stateless module)

- core.shmmem (shared memory usage)

- core.uptime (uptime)

- dispatcher.list (dispatcher target status)

Each command can be enabled or disabled via the command-line.
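As an illustration of how such a selection could be validated, here is a minimal sketch that splits a comma-separated value (the format taken by the --kamailio.methods flag) and rejects anything outside the list above. The names `implemented` and `parseMethods` are hypothetical, and the exporter itself may handle this differently.

```go
package main

import (
	"fmt"
	"strings"
)

// implemented lists the RPC methods named in this post.
var implemented = map[string]bool{
	"tm.stats":        true,
	"sl.stats":        true,
	"core.shmmem":     true,
	"core.uptime":     true,
	"dispatcher.list": true,
}

// parseMethods splits a comma-separated flag value, skips empty entries
// and returns an error for any method that is not implemented.
func parseMethods(flagValue string) ([]string, error) {
	var methods []string
	for _, m := range strings.Split(flagValue, ",") {
		m = strings.TrimSpace(m)
		if m == "" {
			continue
		}
		if !implemented[m] {
			return nil, fmt.Errorf("method %q is not implemented", m)
		}
		methods = append(methods, m)
	}
	return methods, nil
}

func main() {
	fmt.Println(parseMethods("tm.stats,sl.stats"))
	fmt.Println(parseMethods("tm.stats,core.version"))
}
```

Failing early on an unknown method keeps a typo on the command line from silently dropping a whole family of metrics.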

Here is a sample of the exposed metrics for sl.stats:

    # HELP kamailio_sl_stats_codes_total Per-code counters.
    # TYPE kamailio_sl_stats_codes_total counter
    kamailio_sl_stats_codes_total{code="200"} 1.089737e+06
    kamailio_sl_stats_codes_total{code="202"} 0
    kamailio_sl_stats_codes_total{code="2xx"} 0
    kamailio_sl_stats_codes_total{code="300"} 0
    kamailio_sl_stats_codes_total{code="301"} 0
    kamailio_sl_stats_codes_total{code="302"} 0
    kamailio_sl_stats_codes_total{code="400"} 4198
    kamailio_sl_stats_codes_total{code="401"} 0
    kamailio_sl_stats_codes_total{code="403"} 668081
    kamailio_sl_stats_codes_total{code="404"} 0
    kamailio_sl_stats_codes_total{code="407"} 0
    kamailio_sl_stats_codes_total{code="408"} 0
    kamailio_sl_stats_codes_total{code="483"} 143871
    kamailio_sl_stats_codes_total{code="4xx"} 110445
    kamailio_sl_stats_codes_total{code="500"} 0
    kamailio_sl_stats_codes_total{code="5xx"} 0
    kamailio_sl_stats_codes_total{code="6xx"} 0
    kamailio_sl_stats_codes_total{code="xxx"} 0
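The output above is one counter family with the reply code carried in a `code` label. A minimal sketch of that mapping, assuming the sl.stats reply has already been decoded into a map of code to count (the helper name `renderCodes` and the map shape are assumptions, and the real exporter goes through the Prometheus client library instead of formatting text itself):

```go
package main

import (
	"fmt"
	"sort"
	"strings"
)

// renderCodes turns a map of reply code -> count into exposition lines,
// one sample per code, with the code carried in a label.
func renderCodes(name string, counts map[string]float64) string {
	codes := make([]string, 0, len(counts))
	for code := range counts {
		codes = append(codes, code)
	}
	sort.Strings(codes) // deterministic output order

	var b strings.Builder
	fmt.Fprintf(&b, "# TYPE %s counter\n", name)
	for _, code := range codes {
		fmt.Fprintf(&b, "%s{code=%q} %g\n", name, code, counts[code])
	}
	return b.String()
}

func main() {
	fmt.Print(renderCodes("kamailio_sl_stats_codes_total", map[string]float64{
		"200": 1089737,
		"403": 668081,
		"4xx": 110445,
	}))
}
```

Using a single metric name with a label, rather than one metric per code, lets queries aggregate or filter on `code` directly.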

Usage

The CTL module must be loaded by the Kamailio instance.

To run it:

./kamailio_exporter [flags]

Help on flags:

    ./kamailio_exporter --help

    Flags:
          --help                    Show context-sensitive help (also try --help-long
                                    and --help-man).
      -l, --web.listen-address=":9494"
                                    Address to listen on for web interface and
                                    telemetry.
          --web.telemetry-path="/metrics"
                                    Path under which to expose metrics.
      -u, --kamailio.scrape-uri="unix:/var/run/kamailio/kamailio_ctl"
                                    URI on which to scrape kamailio. E.g.
                                    "unix:/var/run/kamailio/kamailio_ctl" or
                                    "tcp://localhost:2049"
      -m, --kamailio.methods="tm.stats,sl.stats,core.shmmem,core.uptime"
                                    Comma-separated list of methods to call. E.g.
                                    "tm.stats,sl.stats". Implemented:
                                    tm.stats,sl.stats,core.shmmem,core.uptime,dispatcher.list
      -t, --kamailio.timeout=5s     Timeout for trying to get stats from kamailio.

Download

The exporter is available here: https://github.com/florentchauveau/kamailio_exporter.

Pre-compiled binaries are available in releases.

Further Reading

- Writing Exporters from Prometheus

- Writing Prometheus Exporters from Percona

- Prometheus Component Builds

- promu

- Kamailio CTL module